AI readiness assessments written for traditional enterprises don't fit organizations that already operate in short cycles. The scoring dimensions are different, the maturity levels compress, and the governance model has to evolve every quarter instead of being signed off once. This post gives leaders of already-agile organizations a specific framework to score AI readiness, a map from agile maturity to AI readiness, and the five gaps we see most often in the field.

Key Takeaways

- RAND research (August 2024) reports that, by some estimates, more than 80% of AI projects fail, twice the failure rate of non-AI IT projects.

- Generic AI readiness frameworks from Microsoft, Cisco, and Gartner score six to seven pillars, but none treat iterative delivery as an input to readiness.

- Agile organizations get a head start on AI (cross-functional teams, short cycles, feedback loops), but new gaps appear in data and governance.

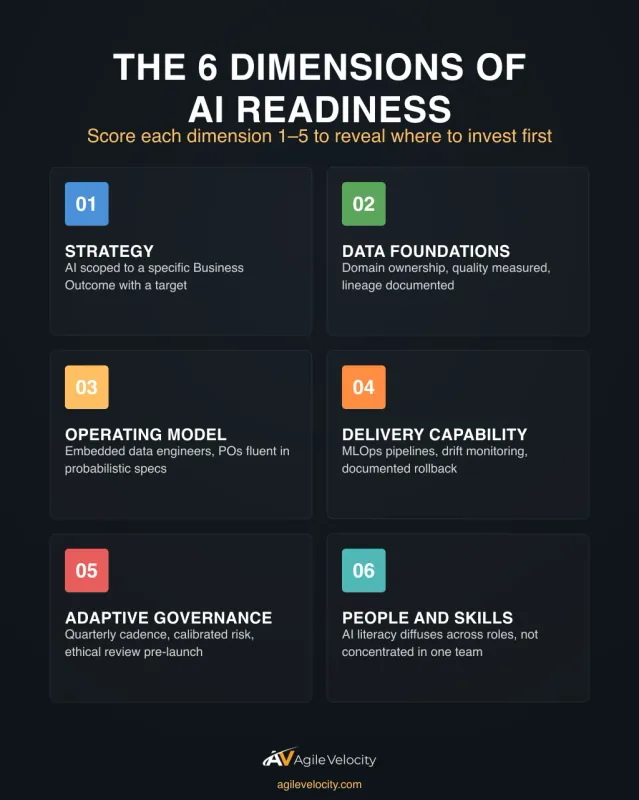

- Score six dimensions: strategy, data foundations, operating model, delivery capability, adaptive governance, and people and skills.

- Path to Agility® maps AI readiness onto the same outcomes already being measured. No separate scorecard, no competing roadmap.

What AI Readiness Means for an Agile Organization

AI readiness is the honest answer to a single leadership question: if the board funded a material AI initiative tomorrow, would your teams be able to deliver value from it within the next two planning intervals? AI initiatives fail at roughly twice the rate of non-AI IT work. RAND's August 2024 study traces the leading root causes to unclear problem framing and gaps in data, the two failure modes a readiness assessment is designed to surface before budget is committed.

For an organization still operating in waterfall patterns, that question exposes deep gaps: unclear product strategy, siloed teams, annual budget cycles, no shared data. For an organization already practicing agile, the gaps are different and often subtler. The cross-functional teams exist; the short cycles exist; the feedback loops exist. What usually don't exist yet are data at a granularity models can consume, ML-specific delivery practices, and governance that can evolve every quarter.

A real AI readiness assessment for an agile organization is not a checklist. It's a score across six dimensions, mapped to the Business Outcomes the organization is already trying to move. If AI readiness doesn't connect to those outcomes, the assessment is theater.

The Six Dimensions of AI Readiness (Through an Agile Lens)

These six dimensions are the ones that consistently separate agile organizations that successfully deliver AI value from agile organizations that stall after the first proof of concept. The named pillars overlap with what Microsoft, Cisco, and Gartner publish, but the definitions are different because the operating context is different.

1. Strategy and Business Outcomes

Does the organization have a clear picture of which Business Outcomes AI is expected to move, and by how much? "Use AI" is not a strategy. "Reduce cycle time on claims processing by 30% through AI-assisted triage" is.

Signals of readiness: AI initiatives are scoped as experiments against specific Business Outcomes (Speed, Quality, Predictability, Customer Satisfaction), with baseline measurements agreed upfront. Signals of a gap: AI is scoped as a technology project with no outcome target, or the target is a vague "efficiency" claim with no denominator.

2. Data Foundations

This is where most agile organizations discover the gap. Teams are good at shipping features; they're often not organized to treat data as a product. Gartner's February 2025 research predicts that through 2026, organizations will abandon 60% of AI projects unsupported by AI-ready data.

Signals of readiness: clear data ownership per domain, data quality measured and reported, feature stores or equivalent for ML-relevant data, documented data lineage for the systems AI will touch. Signals of a gap: data ownership is fuzzy across teams, data quality is an unstaffed tech-debt backlog, models would need to be trained on data no one trusts.

3. Operating Model and Team Structures

Cross-functional teams are table stakes for agile, but AI adds two new roles most teams don't have yet: a data engineer who sits inside the team, and a product owner who's fluent in probabilistic outcomes.

Signals of readiness: at least one team runs with an embedded data/ML engineer, product owners can articulate the difference between a deterministic feature and a model with 82% accuracy, and the team has a clear customer for the model's output. Signals of a gap: ML work is brokered through a central data science team with its own backlog, product owners define AI features like web forms with no tolerance discussion, "the AI team" is a separate silo.
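To make "probabilistic acceptance criteria" concrete, here is a minimal sketch of what a product owner might agree with the team instead of a deterministic requirement. The class name, field names, threshold values, and sample predictions are all hypothetical illustrations, not a prescribed standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AcceptanceCriteria:
    """Hypothetical acceptance criteria for a binary classifier."""
    min_accuracy: float             # share of confident predictions that must be correct
    max_false_positive_rate: float  # tolerated false positives among actual negatives
    min_confidence: float           # below this, the case is routed to a human


def evaluate(predictions, criteria):
    """predictions: list of (predicted, actual, confidence) tuples."""
    confident = [p for p in predictions if p[2] >= criteria.min_confidence]
    deferred = len(predictions) - len(confident)
    correct = sum(1 for pred, actual, _ in confident if pred == actual)
    negatives = [p for p in confident if not p[1]]
    false_positives = sum(1 for pred, _, _ in negatives if pred)
    accuracy = correct / len(confident) if confident else 0.0
    fpr = false_positives / len(negatives) if negatives else 0.0
    return {
        "accuracy": accuracy,
        "false_positive_rate": fpr,
        "deferred_to_human": deferred,
        "accepted": accuracy >= criteria.min_accuracy
        and fpr <= criteria.max_false_positive_rate,
    }


# Illustrative data only: (predicted, actual, model confidence).
sample = [
    (True, True, 0.95),
    (True, False, 0.90),   # a false positive
    (False, False, 0.85),
    (False, False, 0.80),
    (True, True, 0.75),
    (False, True, 0.55),   # too uncertain, so a human decides
]
criteria = AcceptanceCriteria(
    min_accuracy=0.80, max_false_positive_rate=0.35, min_confidence=0.60
)
result = evaluate(sample, criteria)
print(result)
```

The point of the sketch is the conversation it forces: the team has to name an accuracy floor, a false-positive tolerance, and what happens when the model is uncertain before the work is accepted.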

4. Delivery Capability (DevOps and MLOps Maturity)

Shipping a model to production is not the same as shipping a feature to production. You need versioning for models and data, continuous training pipelines, drift monitoring, and rollback paths. Strong DevOps practices are the scaffolding MLOps sits on top of. Organizations without the first will struggle with the second.

Signals of readiness: CI/CD extends to model artifacts, model performance is monitored in production, there's a playbook for rollback when a model starts drifting. Signals of a gap: models are hand-deployed by data scientists, "it worked in the notebook" counts as done, nobody owns the model once it's live.
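As one illustration of drift monitoring with a rollback trigger, the sketch below computes a Population Stability Index (PSI) between a baseline sample and a production sample of a single numeric feature. PSI is one common drift measure among several; the 0.10 and 0.25 cut-offs are a widely used rule of thumb, assumed here rather than taken from this post, and the function names and sample data are hypothetical.

```python
import math


def population_stability_index(baseline, current, bins=10):
    """PSI between two samples of one numeric feature, binned on the baseline range."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor keeps empty bins from producing log(0).
        return [max(c / len(values), 1e-6) for c in counts]

    b, c = shares(baseline), shares(current)
    return sum((ci - bi) * math.log(ci / bi) for bi, ci in zip(b, c))


def drift_action(psi, warn=0.10, rollback=0.25):
    """Map a PSI value to a playbook step (thresholds are assumptions)."""
    if psi >= rollback:
        return "rollback"
    if psi >= warn:
        return "investigate"
    return "ok"


# Illustrative data: the production sample has shifted toward higher values.
baseline = [i / 100 for i in range(100)]
shifted = [0.5 + i / 200 for i in range(100)]

stable_action = drift_action(population_stability_index(baseline, baseline))
shifted_action = drift_action(population_stability_index(baseline, shifted))
print(stable_action, shifted_action)
```

In practice a check like this would run on a schedule inside the pipeline, with the "rollback" branch wired to redeploy the last approved model version.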

5. Adaptive Governance

Traditional AI governance is a one-time sign-off: a risk committee reviews the system, approves it, and rarely revisits it. That approach breaks the moment a model is retrained, which for any real AI system happens constantly. Agile organizations need governance that evolves at the same cadence as the product.

Signals of readiness: governance reviews are scheduled at the same rhythm as quarterly planning, model risk categories are documented, there's a clear escalation path when a model's behavior drifts outside acceptable bounds, ethical review happens before a model ships, not after complaints. Signals of a gap: a committee approved AI policy once in 2024 and hasn't revisited it, risk categories are binary ("approved" or "not"), models change materially between governance touchpoints with no process.
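A small sketch can show how far a governance calendar has fallen behind a retraining calendar. The risk tiers, review intervals, and model names below are hypothetical; the idea is only that risk categories are graded rather than binary, and that each model's time since review is checked against its tier.

```python
from dataclasses import dataclass

# Hypothetical tiers: maximum months a model may run between governance reviews.
REVIEW_INTERVAL_MONTHS = {"low": 6, "medium": 3, "high": 1}


@dataclass
class Model:
    name: str
    risk_tier: str
    retrain_every_months: int
    months_since_review: int


def governance_status(model):
    """Count retrains since the last review and flag overdue reviews."""
    limit = REVIEW_INTERVAL_MONTHS[model.risk_tier]
    retrains = model.months_since_review // model.retrain_every_months
    return {
        "retrains_since_review": retrains,
        "review_overdue": model.months_since_review > limit,
    }


# Annual review against monthly retraining: twelve unreviewed model versions.
triage = governance_status(Model("claims-triage", "high", 1, 12))
search = governance_status(Model("doc-search", "low", 3, 3))
print(triage, search)
```

A report like this, run at each planning interval, makes the gap between governance touchpoints and model changes visible instead of anecdotal.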

6. People and Skills

AI readiness is not primarily about data scientists. It's about whether the existing workforce knows how to work with AI-augmented tools and decisions. Scrum masters need to know how to facilitate conversations where models are one voice among many. Product owners need to know how to write acceptance criteria for probabilistic systems. Engineers need enough ML literacy to debug a system that includes a model.

Signals of readiness: at least 25% of practitioners have completed structured AI literacy training, there's a visible upskilling path for people in agile roles, hiring for cross-functional teams includes at least basic AI-fluency screening. Signals of a gap: AI skills are concentrated in a small team and don't diffuse, training is ad-hoc or optional, role descriptions for scrum masters and product owners haven't been updated since before generative AI.

Maturity Levels for AI Readiness

Each dimension can be scored at one of five maturity levels. The same scale applies across all six dimensions, which keeps the assessment comparable over time.

| Level | What It Looks Like | Typical Leadership Action |
|---|---|---|
| 1: Unaware | No dimension-specific practice, or the practice exists in name only | Set a baseline; measure where you are before targeting where you want to be |
| 2: Exploring | One or two teams experimenting, no organizational pattern yet | Protect the experiments, document what's working, avoid premature scaling |
| 3: Established | Practice exists across multiple teams, guided by shared principles | Invest in the connective tissue: shared platforms, cross-team standards |
| 4: Scaled | Practice is the default across the delivery system, not an exception | Optimize: reduce variance, codify what works, retire what doesn't |
| 5: Embedded | Practice adapts continuously; teams improve it without being asked | Guard against regression when leadership or structure changes |

A typical agile organization starting a serious AI initiative scores 3–4 on Operating Model and Delivery Capability (the agile head start pays off) but scores 1–2 on Data Foundations and Adaptive Governance (the gaps the legacy agile setup didn't address). That pattern tells you where to invest first.

How Agile Maturity Maps to AI Readiness

Agile maturity isn't a prerequisite for AI readiness, but it's a lever, and the lever's direction isn't always positive. Three common patterns:

Pattern A: Agile maturity accelerates AI readiness. Organizations with strong cross-functional teams, short cycles, and a working product-owner role move faster on dimensions 1, 3, and 6 (strategy, operating model, people). They already know how to scope outcome-first initiatives and how to evolve a product based on what the market shows them.

Pattern B: Agile maturity hides AI readiness gaps. Organizations that look agile on the surface but haven't moved the data or governance layers discover the comfortable practices don't carry over. The stand-ups and retros continue, but the data scientist embedded on the team can't ship because the data pipeline takes four weeks to add a new data field. The data scientist asks for a new attribute (say, a customer's last support contact) and waits a month before it shows up in a dataset they can model on. This is the same dynamic that causes agile transformations to stall after initial wins: the practices looked adopted but never reached the operating layer.

Pattern C: Agile maturity is irrelevant if AI governance isn't addressed. The most mature agile organization with excellent DevOps and strong product ownership can still stall on AI if the governance body still operates on annual review cycles. This is the single most common reason AI programs stall in otherwise-capable organizations.

If your organization fits Pattern B or C, the AI readiness assessment is where the misalignment becomes visible. That's a good thing: it gives leadership something specific to work on instead of a vague sense that "the AI thing isn't going well."

The Assessment Framework: Questions Leaders Should Score

The fastest way to start is to score each dimension on the 1–5 scale above using a short list of concrete questions. These aren't exhaustive; they're the questions that consistently separate readiness from the absence of readiness.

| Dimension | Three Questions to Score |
|---|---|
| Strategy | Which Business Outcome does each AI initiative move? What's the baseline and target? Who accepts the outcome when it's delivered? |
| Data Foundations | Who owns each relevant data domain? What's the documented data quality? Can a new ML feature get clean data in under two weeks? |
| Operating Model | Do ML-relevant teams have embedded data engineering? Are product owners fluent in probabilistic acceptance criteria? Is the customer of every model clearly named? |
| Delivery Capability | Does CI/CD extend to model artifacts? Is drift monitored in production? Is there a documented rollback path? |
| Adaptive Governance | How often does the governance body meet? Are model risk categories documented and calibrated? Does ethical review happen before or after launch? |
| People and Skills | What percent of practitioners have completed AI literacy training? Is there a visible upskilling path? Does hiring include AI-fluency screening? |

Score each question 1–5, take the lowest score per dimension as the dimension-level rating (a dimension is only as strong as its weakest question), and keep the numbers visible at the leadership level the same way delivery metrics are kept visible.
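The scoring rule can be sketched in a few lines. The question scores below are made-up illustrations of a typical agile profile, not data from a real assessment, and the function name is hypothetical.

```python
# Illustrative scores only: three questions per dimension, each scored 1-5.
question_scores = {
    "Strategy": [4, 3, 4],
    "Data Foundations": [2, 1, 2],
    "Operating Model": [4, 3, 3],
    "Delivery Capability": [3, 4, 3],
    "Adaptive Governance": [1, 2, 2],
    "People and Skills": [3, 3, 2],
}


def dimension_ratings(scores):
    """Rate each dimension by its weakest question (lowest score wins)."""
    for dimension, values in scores.items():
        if not values or any(v not in range(1, 6) for v in values):
            raise ValueError(f"{dimension}: scores must be integers from 1 to 5")
    return {dimension: min(values) for dimension, values in scores.items()}


ratings = dimension_ratings(question_scores)

# Weakest dimensions first: a simple ordering of where to invest.
priorities = sorted(ratings, key=ratings.get)

for dimension in priorities:
    print(f"{ratings[dimension]}  {dimension}")
```

Taking the minimum rather than the average is deliberate: one unanswered ownership question can block delivery even when the other two answers look healthy.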

Common Readiness Gaps in Agile Organizations

We see the same five gaps repeatedly when agile organizations start a material AI initiative. If two or more of these are true in your organization, the assessment is likely to return mid-range scores that mask a real delivery risk.

Gap 1: Data ownership is unstaffed. Teams assume data is "someone's job" and discover during the first AI initiative that the domain has no named owner, no quality metric, and no way to add a new data field to the warehouse in less than a quarter.

Gap 2: Product owners treat AI features like web features. Acceptance criteria read like deterministic requirements ("system shall classify the document"), with no discussion of accuracy thresholds, false-positive tolerance, or what happens when the model is uncertain.

Gap 3: MLOps is a slide, not a practice. The leadership deck mentions continuous training and model monitoring, but the actual workflow is still a data scientist sending a pickle file to a DevOps engineer at the end of a quarter.

Gap 4: Governance lives on a different clock. The governance body reviews AI decisions annually. The models get retrained monthly. The calendar mismatch means either the governance body is overruled or the models can't ship on time.

Gap 5: AI literacy is concentrated. A small team has real AI skills; the other 90% of practitioners have surface-level familiarity at best. When the AI work needs to scale, the knowledge doesn't.

Each of these gaps is fixable, but all of them require leadership action, not individual-team heroics. The practices teams adopt during transformation don't sustain themselves without explicit reinforcement; AI readiness gaps are no different.

How Path to Agility® Integrates AI Readiness

AI readiness doesn't need a separate scorecard if the organization already uses an outcome-driven approach. The Path to Agility® approach is built around 9 Business Outcomes, 26 Agile Outcomes, 100 Capabilities, and 400+ Practices. AI readiness maps onto that structure: it's a set of additional capabilities and practices that support the same outcomes already being measured.

- Speed picks up capabilities around MLOps maturity and data pipeline velocity

- Quality picks up capabilities around model drift monitoring and adaptive governance

- Predictability picks up capabilities around probabilistic acceptance criteria and model performance SLAs

- Customer Satisfaction picks up capabilities around explainability and ethical review

- Employee Engagement picks up capabilities around AI literacy and role evolution

Leaders don't need a separate AI readiness program. They need the AI-specific capabilities added to the existing Path to Agility® approach, with the same focus on the practices that will move outcomes rather than on adopting every AI tool on the market.

Our Path to Agility® Navigator is the diagnostic and reporting tool that answers the questions leaders care about: are we getting business benefits from our AI efforts, and which measures are being impacted? When AI capabilities are added to the capability library, the assessment works the same way: score the capability, see which Business Outcome it supports, track the movement over time.

Frequently Asked Questions

What's the difference between AI readiness and AI maturity?

Readiness measures whether you can start successfully. Maturity measures how well the practice runs once it's established. An organization can be ready (score 3 or higher on all six dimensions) without being mature on any of them. The readiness assessment tells you whether to start; the maturity assessment tells you how well you're doing once you have.

Do agile organizations have an advantage when adopting AI?

Yes, but a narrower one than leaders usually assume. The agile advantages (cross-functional teams, short cycles, product ownership) accelerate three of the six dimensions (strategy, operating model, people). The other three (data foundations, delivery capability, adaptive governance) need new work that agile alone doesn't provide, although strong DevOps practices give delivery capability a base to build on. In our experience, organizations that lean only on their agile maturity stall at 40–60% of their AI potential.

How often should an AI readiness assessment be run?

Quarterly for the first year of any serious AI initiative, then semi-annually once the organization has reached Level 3 on at least four of the six dimensions. The readiness scores move faster than traditional maturity scores because the underlying technology moves faster. A score from 12 months ago is usually already out of date.
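The cadence rule, together with the readiness threshold from the first question above, can be written down as a short sketch. It assumes one reading of the rule: the assessment stays quarterly until the first year has passed and at least four dimensions sit at Level 3 or higher. The function names and ratings are hypothetical.

```python
def is_ready(ratings):
    """Ready means Level 3 or higher on every dimension."""
    return all(level >= 3 for level in ratings.values())


def assessment_cadence(ratings, months_into_initiative):
    """Quarterly in year one; semi-annual once four dimensions reach Level 3."""
    at_level_3 = sum(1 for level in ratings.values() if level >= 3)
    if months_into_initiative >= 12 and at_level_3 >= 4:
        return "semi-annual"
    return "quarterly"


# Illustrative dimension ratings, not real assessment data.
ratings = {
    "Strategy": 3,
    "Data Foundations": 2,
    "Operating Model": 4,
    "Delivery Capability": 3,
    "Adaptive Governance": 2,
    "People and Skills": 3,
}

print(is_ready(ratings))                # two dimensions are still below Level 3
print(assessment_cadence(ratings, 6))   # still inside the first year
print(assessment_cadence(ratings, 14))  # past year one with four dimensions at 3+
```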

What's the single biggest AI readiness gap in agile organizations?

Adaptive governance. Most agile organizations have working product ownership and some form of DevOps, so the operating model and delivery dimensions rarely start at the bottom of the scale. Data foundations are usually weak as well, but governance often starts at 1 because the existing model (annual review, binary approval) simply doesn't fit a system that changes continuously. Closing this gap typically requires the most senior-level intervention.

Can we run this assessment ourselves or do we need an outside assessor?

The six dimensions and the 1-5 scale are usable by any competent leadership team running the assessment on itself. The value of an outside assessor is calibration: teams often score themselves a full level higher than an external assessor would. If the first self-assessment shows 3-4 across the board, that's usually a signal to bring in external eyes before committing to a multi-quarter AI program.

The Bottom Line

AI readiness for an agile organization isn't a generic pillar scorecard. It's a six-dimension assessment that builds on the agile maturity already in place, exposes the specific gaps legacy agile didn't address, and maps cleanly onto the Business Outcomes the organization is already measuring. The organizations that succeed with AI won't be the ones that ran a separate "AI transformation"; they'll be the ones that added AI readiness to the transformation approach they're already running.

If your organization is considering a material AI initiative, the first honest act is a readiness score. Our Organizational Health Check scores your organization across the 9 Business Outcomes in about four minutes and gives the AI-specific layer a natural foundation to build on.
